Are your AI coding workflows actually working?

AX measures what matters: cost per PR, iteration depth, CI success, and other metrics that tell you whether your agentic coding is getting better.

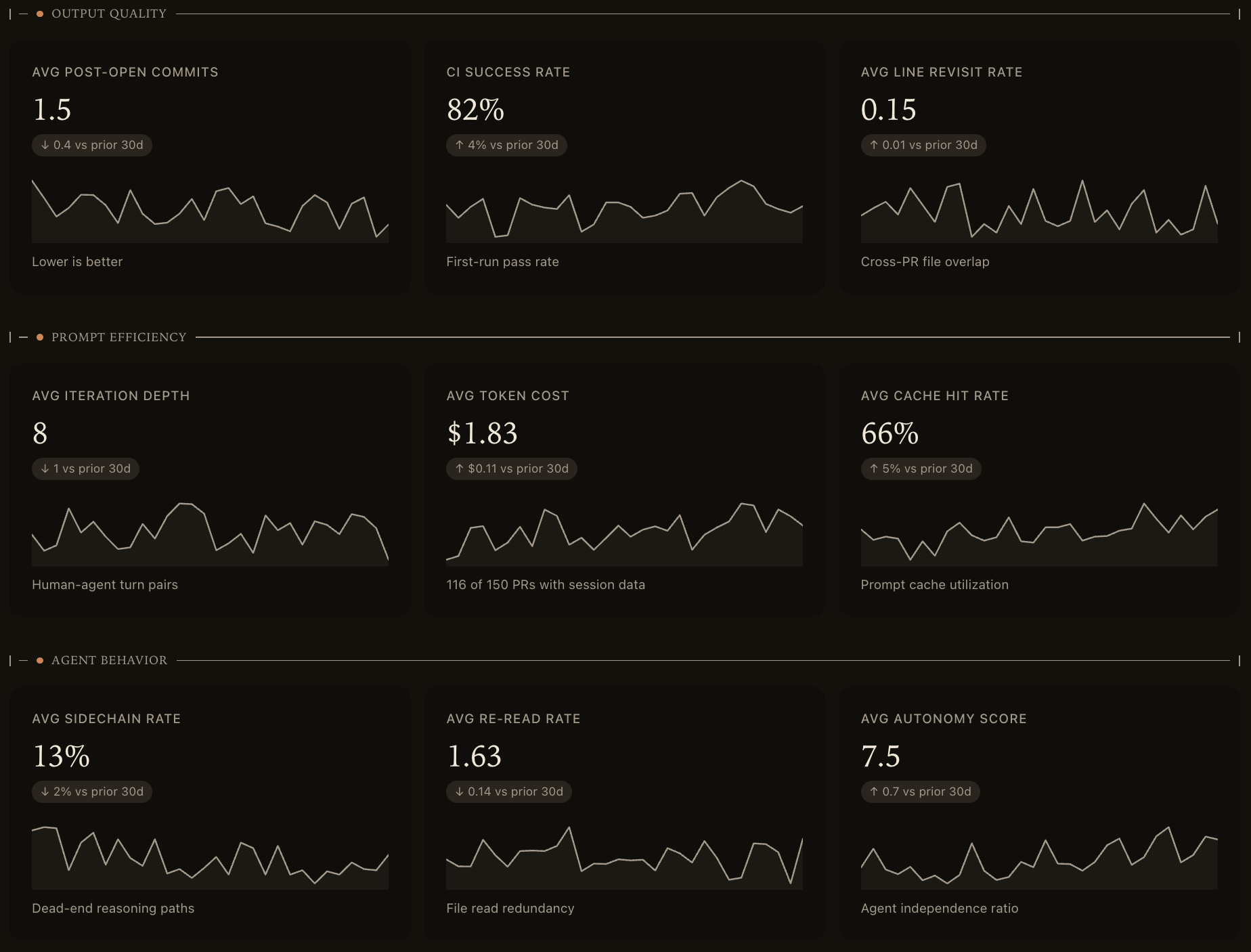

11 metrics across 3 categories

Understand every dimension of your AI coding workflow

Output Quality

Is the code your agent produces actually good? CI success, post-open commits, review cycle time, and line revisit rate.

Post-Open Commits, CI Success Rate, Line Revisit Rate, Review Cycle Time
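To make two of these metrics concrete, here is a minimal sketch of how CI success rate and average post-open commits could be computed from a list of PRs. The `PullRequest` record, its field names, and both formulas are assumptions for illustration; AX's actual definitions may differ.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    # Hypothetical record; these fields are illustrative, not AX's schema.
    number: int
    ci_passed: bool         # whether CI passed for this PR
    post_open_commits: int  # commits pushed after the PR was opened


def ci_success_rate(prs):
    """Fraction of PRs whose CI passed (assumed definition); 0.0 if no PRs."""
    if not prs:
        return 0.0
    return sum(pr.ci_passed for pr in prs) / len(prs)


def mean_post_open_commits(prs):
    """Average number of commits added after a PR was opened; 0.0 if no PRs."""
    if not prs:
        return 0.0
    return sum(pr.post_open_commits for pr in prs) / len(prs)


prs = [
    PullRequest(1, True, 0),
    PullRequest(2, False, 3),
    PullRequest(3, True, 1),
    PullRequest(4, True, 0),
]
print(ci_success_rate(prs))         # 0.75
print(mean_post_open_commits(prs))  # 1.0
```

Under these assumed definitions, a higher CI success rate and fewer post-open commits would both suggest the agent's first draft needed less fixing.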

Prompt Efficiency

Are you getting results with fewer interactions and less cost? Token spend, iteration depth, and cache utilization.

Iteration Depth, Token Cost per PR, Cache Hit Rate, Unmerged Token Spend
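As a rough illustration of the cost metrics, the sketch below derives token cost per PR, cache hit rate, and unmerged token spend from per-session records. The `Session` type, its fields, and the formulas are hypothetical; they show one plausible reading of the metric names, not AX's implementation.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical per-session record; not AX's actual data model.
    pr_number: int
    merged: bool                # whether the associated PR was merged
    cost_usd: float             # token spend for the session
    cache_read_tokens: int      # input tokens served from the prompt cache
    uncached_input_tokens: int  # input tokens that missed the cache


def token_cost_per_pr(sessions):
    """Total spend divided by the number of distinct PRs (assumed definition)."""
    pr_numbers = {s.pr_number for s in sessions}
    if not pr_numbers:
        return 0.0
    return sum(s.cost_usd for s in sessions) / len(pr_numbers)


def cache_hit_rate(sessions):
    """Share of input tokens that were read from cache (assumed definition)."""
    cached = sum(s.cache_read_tokens for s in sessions)
    total = cached + sum(s.uncached_input_tokens for s in sessions)
    return cached / total if total else 0.0


def unmerged_token_spend(sessions):
    """Spend on sessions whose PR never merged (assumed definition)."""
    return sum(s.cost_usd for s in sessions if not s.merged)


sessions = [
    Session(101, True, 1.50, 8000, 2000),
    Session(101, True, 0.50, 4000, 1000),
    Session(102, False, 2.00, 3000, 2000),
]
print(token_cost_per_pr(sessions))     # 2.0
print(cache_hit_rate(sessions))        # 0.75
print(unmerged_token_spend(sessions))  # 2.0
```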

Agent Behavior

How well is the agent navigating problems? Backtracking, redundant reads, and autonomy.

Sidechain Rate, Re-Read Rate, Autonomy Score
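One way to picture a redundant-read metric is as the fraction of file reads in a session that target a file the agent has already read. The function below is a sketch under that assumed definition; the name `re_read_rate` and its input format are hypothetical.

```python
def re_read_rate(read_paths):
    """Fraction of reads that repeat an earlier read in the same session.

    `read_paths` is the ordered list of file paths the agent read.
    This definition is an assumption, not AX's documented formula.
    """
    if not read_paths:
        return 0.0
    seen = set()
    repeats = 0
    for path in read_paths:
        if path in seen:
            repeats += 1  # file was already read earlier in the session
        else:
            seen.add(path)
    return repeats / len(read_paths)


reads = ["a.py", "b.py", "a.py", "c.py", "a.py"]
print(re_read_rate(reads))  # 0.4
```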

How it works

Three steps, five minutes

1. Create an account

Sign in with GitHub to create your org. Invite your team and get set up in seconds.

2. Connect GitHub

Install the AX GitHub App on your org. We receive webhook events and backfill up to 90 days of PR history.

3. Connect the CLI

Install the CLI and run ax init to link your local environment. AX hooks into Claude Code's session lifecycle automatically.

brew install acroos/tap/ax && ax init

Free to start, scales with your team

Core metrics and GitHub integration are included on the free plan. Upgrade for unlimited team members and data export.